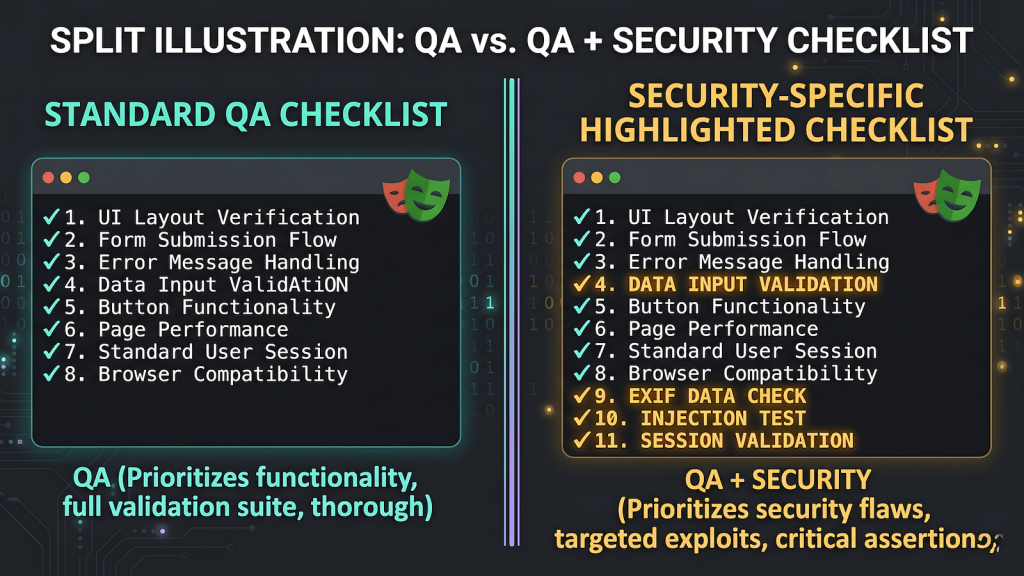

Security testing is usually framed as a separate discipline with its own titles, certifications, and tooling. Security engineers, penetration testers, bug bounty hunters, AppSec teams: the expectation is that security is their domain and QA engineers test functionality. In practice the boundary is porous in ways that matter for both careers and coverage. Security testing for QA engineers is not a specialization that requires a credential before you can participate. It is a framing that applies to work many QA engineers are already doing.

QA engineers interact with every surface of a system: forms, file uploads, authentication flows, API endpoints, session handling, input validation. Anyone testing those surfaces with enough care is doing security-adjacent work whether they recognize it as that or not. This post makes explicit what that looks like in practice, organized around specific test cases rather than theory.

EXIF Data on Image Uploads

When a user uploads an image, that image may contain EXIF metadata: GPS coordinates, device make and model, timestamps. A functional QA pass on an image upload feature checks that the upload succeeds, the image renders, and file type validation works. A security-aware QA pass also checks whether the uploaded image is served back to other users with EXIF data still embedded.

GPS coordinates in a profile photo or forum post image are a location-disclosure vulnerability. If the platform serves the original file without stripping the metadata, anyone who downloads that image gets the coordinates of wherever the photo was taken. Testing for this requires uploading an image with known EXIF data (any photo taken on a phone with location services enabled will do) and then checking the served file. This is not a penetration testing skill. It is a thorough upload test that most functional QA coverage misses.
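The "check the served file" step can be automated. The following is a minimal sketch using only the Python standard library, assuming the served image is a JPEG; the function name `has_exif_segment` is made up for illustration. It flags any Exif block rather than GPS tags specifically, since GPS coordinates are stored inside that block.

```python
import struct


def has_exif_segment(jpeg_bytes: bytes) -> bool:
    """Return True if a JPEG contains an APP1 segment carrying Exif data.

    Simplified sketch: walks the header segments that precede the image
    data and does not handle every unusual marker layout.
    """
    if jpeg_bytes[:2] != b"\xff\xd8":  # every JPEG starts with the SOI marker
        raise ValueError("not a JPEG file")
    pos = 2
    while pos + 4 <= len(jpeg_bytes):
        if jpeg_bytes[pos] != 0xFF:
            break  # not at a marker boundary; stop scanning
        marker = jpeg_bytes[pos + 1]
        if marker in (0xDA, 0xD9):
            break  # start of scan or end of image: no more metadata segments
        # The 2-byte big-endian length counts itself but not the marker.
        (length,) = struct.unpack(">H", jpeg_bytes[pos + 2:pos + 4])
        payload = jpeg_bytes[pos + 4:pos + 2 + length]
        if marker == 0xE1 and payload.startswith(b"Exif\x00\x00"):
            return True
        pos += 2 + length
    return False
```

In a real test, you would download the image the platform serves to other users and pass its bytes to this function; a `True` result on a file that was uploaded with location data is worth a closer look with a dedicated metadata tool to confirm whether the GPS tags themselves survived.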

Input Fields and Injection Testing

SQL injection testing is typically framed as a security specialty. The mechanics are within reach of any QA engineer who understands what correct input handling looks like. Submitting a single quote character to a text field and watching what the application returns is basic input validation testing. A 500 response with a database error string visible in the body is a failing test. A clean rejection with a validation message is a passing one.

The same principle extends to other injection vectors. Angle brackets in a text field test for reflected XSS: if the characters are echoed back unescaped in the rendered page, the application is vulnerable. A payload like '; DROP TABLE users; -- in a search field tests whether user input is being parameterized in database queries. You do not need to know how to exploit these vulnerabilities to write the test cases. You need to know what correct behavior looks like (graceful rejection, proper escaping, no raw database errors in the response), which is the QA baseline.
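Those pass/fail criteria can be written down as a small checker. This is a sketch under stated assumptions: `check_injection_response` is a hypothetical helper, and the error markers are a short illustrative list of strings that appear in common database error messages, not an exhaustive one. It takes the status code and body you observed after submitting a payload and returns the reasons the test should fail.

```python
import html

# Illustrative fragments of database error messages (assumed, not exhaustive).
DB_ERROR_MARKERS = (
    "you have an error in your sql syntax",
    "unterminated quoted string",
    "sqlstate",
    "sqlite3.operationalerror",
)


def check_injection_response(status_code: int, body: str, payload: str) -> list[str]:
    """Return a list of problems; an empty list means the response passes."""
    problems = []
    if status_code >= 500:
        problems.append(f"server error {status_code}")
    lowered = body.lower()
    for marker in DB_ERROR_MARKERS:
        if marker in lowered:
            problems.append(f"database error text in body: {marker!r}")
    # A payload containing angle brackets should only come back HTML-escaped.
    if any(ch in payload for ch in "<>") and payload in body:
        problems.append("payload reflected unescaped")
    return problems


if __name__ == "__main__":
    xss = "<script>alert(1)</script>"
    print(check_injection_response(200, f"<p>Results for {xss}</p>", xss))
    print(check_injection_response(200, f"<p>Results for {html.escape(xss)}</p>", xss))
```

The function deliberately knows nothing about how to send the request, so it can sit behind whatever HTTP client or browser automation the test suite already uses.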

Context-Aware Threat Modeling

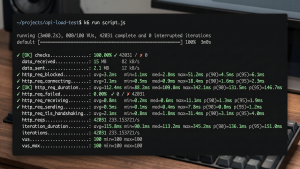

Not every application surface has the same security requirements, and treating them as if they do is its own kind of failure. A public-facing user registration form has different input validation requirements than an internal CMS accessible only to three administrators behind a VPN. Over-engineering the internal tool wastes time. Under-protecting the public form creates real exposure.

Security testing for QA engineers at this level means writing different test plans for surfaces with different threat profiles. The test cases for a public API endpoint should include malformed payloads, authentication bypass attempts, and rate limiting verification. The test cases for an internal tool have a narrower threat model and correspondingly narrower coverage. Recognizing the difference and documenting it in the test plan is security thinking applied to QA work. It is not a specialization, just accurate testing.
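One way to document that difference is to make the threat profile an explicit input to the test plan. The sketch below is only an illustration: `Surface`, `build_test_plan`, and the baseline checks are hypothetical, while the public-endpoint checks are the three named above.

```python
from dataclasses import dataclass

# Checks every surface gets (an assumed baseline for illustration).
BASELINE_CHECKS = [
    "valid input accepted",
    "invalid input rejected with a clear message",
]

# Extra checks for public-facing surfaces.
PUBLIC_CHECKS = [
    "malformed payloads",
    "authentication bypass attempts",
    "rate limiting verification",
]


@dataclass
class Surface:
    name: str
    exposure: str  # "public" or "internal"


def build_test_plan(surface: Surface) -> list[str]:
    """Return the check categories appropriate to the surface's exposure."""
    if surface.exposure == "public":
        return BASELINE_CHECKS + PUBLIC_CHECKS
    if surface.exposure == "internal":
        return list(BASELINE_CHECKS)
    raise ValueError(f"unknown exposure: {surface.exposure!r}")


if __name__ == "__main__":
    for s in (Surface("registration API", "public"), Surface("admin CMS", "internal")):
        print(s.name, "->", build_test_plan(s))
```

Forcing every surface to declare its exposure means an unclassified surface fails loudly instead of silently inheriting the wrong coverage.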

Login Forms and Session Security

Login forms are high-value targets and QA coverage on them tends to stay shallow. Functional coverage checks that valid credentials succeed, invalid credentials fail, and account lockout triggers after a threshold of failed attempts. That is the minimum. Security-aware QA adds a layer of test cases that are still within the QA skill set.

Does the email field's validation reject SQL fragments and other injection payloads, not just empty values or malformed email syntax? Does the form transmit over HTTPS regardless of how the page was accessed? Does the session token rotate after a successful login rather than carrying over the pre-authentication token? Does the server-side session actually invalidate when logout is called, or does the token remain valid after the client clears it? These are test cases, not exploits. Writing them requires knowing what a secure login implementation looks like, not knowing how to attack an insecure one.
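The transport and session questions reduce to comparing a few observations collected during a login and logout cycle. Here is a minimal sketch, assuming you have already captured those values with your usual HTTP client; `LoginObservation` and `session_findings` are hypothetical names, and treating 401 or 403 as the expected post-logout status is an assumption (an application that redirects to the login page would need a different check).

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class LoginObservation:
    form_action_url: str      # URL the login form submits to
    token_before_login: str   # session token issued before authenticating
    token_after_login: str    # session token held after a successful login
    status_after_logout: int  # status when replaying the old token after logout


def session_findings(obs: LoginObservation) -> list[str]:
    """Return the session-security problems found; empty means all checks pass."""
    findings = []
    if urlparse(obs.form_action_url).scheme != "https":
        findings.append("login form does not submit over HTTPS")
    if obs.token_before_login == obs.token_after_login:
        findings.append("session token not rotated at login")
    # Assumption: a protected endpoint answers 401 or 403 for a dead session.
    if obs.status_after_logout not in (401, 403):
        findings.append("old token still accepted after logout")
    return findings


if __name__ == "__main__":
    obs = LoginObservation("http://example.test/login", "abc", "abc", 200)
    for finding in session_findings(obs):
        print("FAIL:", finding)
```

The logout check is the one that needs care when collecting data: the old token has to be saved before logging out and replayed by hand, because the client will have discarded it.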

Making the Work Visible

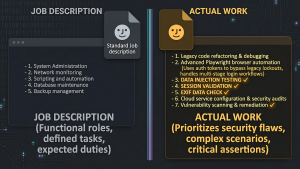

The invisible version of this, doing security-aware testing without ever naming it as such, is common among QA engineers who have been doing the work long enough to develop instincts. The problem is that invisible work does not build a career. It does not appear on a resume. It does not get recognized in performance reviews. It does not transfer clearly to the next role.

If you are writing test cases that cover injection inputs, checking uploads for metadata exposure, and varying your test plan based on the threat profile of the surface you are testing, you are doing security QA. The HackerOne post in this cluster, "I Was a Manual Tester Who Handled HackerOne Tickets," makes the same argument from the career framing side. The technical content is already there. Name it accurately and it becomes legible.
