Testing AI-generated code forced a rethink of what a QA engineer actually does. Not because the fundamentals changed (bugs are still bugs, and bad UX is still bad UX) but because the dev loop changed so dramatically that the QA role had to expand to fill the gap. When an AI agent is shipping fixes faster than a traditional developer ever could, the human verification layer stops being a checkpoint and starts being the entire quality infrastructure. One person. Full coverage. Manual instincts, automation thinking, security awareness, Scrum-adjacent loop management, and enough SDET muscle to know when a fix drifted from the ticket’s intent.

This is the workflow I run on an active QA retainer for a SaaS product built and maintained by an AI dev agent. I’m going to document it in enough detail that you can use it: not as inspiration, but as an actual operating system for the hybrid QA role. AI doesn’t replace junior QAs. It upgrades them. But only if they know how to work the loop.

What the Role Actually Looks Like Now

The noise around AI and QA tends to go one of two directions: either AI is going to replace testers entirely, or QA is just using AI as a smarter search engine. Both are wrong, and both miss what’s actually happening on teams where AI is doing the development work.

When an AI agent is in the dev seat, the QA engineer becomes the only human who touches the product with user intent. That’s not a reduced role. It’s an expanded one. In a single engagement you’re running:

Manual testing: Exploratory coverage, UX judgment, context-aware path testing that no automation catches. You’re the only one in the loop asking whether the product makes sense to a real user, not just whether it functions.

Automation thinking: Knowing which surfaces are regression risks, which fixes need a scripted check next cycle, and where the agent’s output patterns create predictable failure modes worth automating against.

SDET instincts: Reading commit output, understanding what the fix actually changed, catching scope drift when the agent’s interpretation of a ticket didn’t match the intent.

Security surface awareness: Input validation, session handling, IDOR (insecure direct object reference) exposure, auth flows. Every surface the agent touches is a surface you check. Security isn’t a separate lane; it’s part of the verification pass.

Light DevOps awareness: Understanding the deploy pipeline enough to know whether a fix is in staging or production, whether a rollback is needed, whether the environment state is clean for the test.

Scrum-adjacent loop management: Prioritizing the ticket queue, managing parallel fix streams, keeping the PM informed of blocking issues without letting the loop stall.

That’s not a tester. That’s a QA engineer. And the AI dev team structure is what created the conditions for a junior QA to operate at that level, if they learn the workflow.

The Loop

The core cycle is simple. Understand it before you try to optimize it.

Bug found → Ticket filed → Agent picks up → Fix shipped → QA verifies → Comment posted → Ticket closed

On a good day, that loop closes in the same session. A bug found in the morning is verified and closed by afternoon. The agent doesn’t have competing priorities, doesn’t attend standups, doesn’t need context-setting meetings. When a ticket lands, it works the ticket.
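If it helps to see the loop as something stricter than a diagram, it can be read as a small state machine. The sketch below is one way to encode it; the state names mirror the steps in the cycle, the reopen path reflects a failed verification going back to the agent, and the `advance` helper is a hypothetical name used for illustration, not part of any tracker's API.

```python
from enum import Enum


class State(Enum):
    BUG_FOUND = "bug found"
    TICKET_FILED = "ticket filed"
    AGENT_WORKING = "agent picks up"
    FIX_SHIPPED = "fix shipped"
    QA_VERIFYING = "QA verifies"
    COMMENT_POSTED = "comment posted"
    CLOSED = "ticket closed"


# Allowed transitions. The only branch comes after the verification comment:
# a PASS closes the ticket, a FAIL sends it back to the agent.
TRANSITIONS = {
    State.BUG_FOUND: {State.TICKET_FILED},
    State.TICKET_FILED: {State.AGENT_WORKING},
    State.AGENT_WORKING: {State.FIX_SHIPPED},
    State.FIX_SHIPPED: {State.QA_VERIFYING},
    State.QA_VERIFYING: {State.COMMENT_POSTED},
    State.COMMENT_POSTED: {State.CLOSED, State.AGENT_WORKING},
    State.CLOSED: set(),
}


def advance(current: State, target: State) -> State:
    """Move a ticket to `target`, refusing any step that skips the loop."""
    if target not in TRANSITIONS[current]:
        raise ValueError(f"illegal transition: {current.value} -> {target.value}")
    return target
```

The point of the table is that there is no edge from "fix shipped" to "ticket closed". A ticket cannot close without passing through verification and a posted comment.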

Your job in the loop has three phases:

Before the fix: File the ticket correctly. This is where most of the work happens. A well-scoped ticket produces a targeted fix. A loose ticket produces a fix that might be right or might have drifted, and you won’t know until verification.

During the fix: Let the agent work. Don’t interrupt the loop with partial information. If you find something adjacent while the fix is in progress, park it. Don’t expand the ticket.

After the fix: Verify against acceptance criteria, check adjacent surfaces, post the verification comment, close or reopen. Clean and specific either way.

The loop works because it’s disciplined. Break the discipline and the speed becomes a liability. If you want a structured system for how this plays out across a full QA workflow, that’s covered in how to build a structured AI QA workflow.

Direct Fix Protocol

Here’s the power dynamic shift that changes everything about working in an AI dev team: the moment you find a bug or edge case, you can direct a fix immediately. No pushback. No “works on my machine.” No severity sparring. No waiting for the next sprint. You file it, the agent picks it up, and it works the problem.

That’s not a small thing if you’ve spent years watching legitimate bugs die in the backlog because a developer didn’t agree with your severity call or didn’t have bandwidth until next cycle. In this loop, your severity call is the call. Your ticket is the brief. The agent executes. That shift in authority is also why filing discipline is the strongest job-security play in a hybrid QA role.

But that authority comes with a filing requirement. The agent will work whatever you give it. If the ticket is ambiguous, it will interpret, and its interpretation may not match your intent. If the scope is loose, it will fill the space, and the fill may break something adjacent. The direct fix protocol only works cleanly when the bug report earns it.

Bug Report Discipline for AI Agents

This is the core skill upgrade. Filing bug reports for an AI agent is different from filing for a human developer, and the difference matters. The fundamentals of writing effective bug reports still apply, but AI dev teams need additional structure on top of that baseline.

One surface per ticket. Always.

Never combine issues in a single ticket even if they appear related. The agent will attempt to resolve everything in the ticket scope simultaneously, which means fix paths can intersect and produce a mutation that’s harder to trace than the original bugs. File them separately. Link them as related if needed. Let the agent work them in sequence or parallel with clean scope on each.

One failure per report.

If you find three things wrong during a single verification pass, that’s three tickets. Not one ticket with a list. Three tickets, each with its own reproduction path, its own expected result, its own acceptance criteria.

Break out blockers and subtasks as child issues before the agent touches the parent.

If a fix has prerequisites (a data state that needs to be set up, another ticket that needs to close first, a dependency on a different surface) those go into linked child issues explicitly. Don’t leave them inline in the description. The agent reads the ticket as its full brief. If blockers are buried in the notes section, they may not get treated as blockers.

Priority order is explicit, not implied.

File tickets in priority sequence and label them clearly. The agent doesn’t always infer what should be fixed first from context. If ticket A is blocking ticket B, that relationship needs to be in the ticket structure, not assumed from the filing order.
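One way to keep blocking relationships in the ticket structure rather than in your head is to record them as data and derive the work order from that. This is a minimal sketch using Python's standard `graphlib` module; the ticket IDs and the `blocked_by` mapping are made up for illustration.

```python
from graphlib import TopologicalSorter

# Hypothetical ticket IDs, each mapped to the tickets that must close first.
blocked_by = {
    "QA-101": set(),
    "QA-102": {"QA-101"},   # QA-101 blocks QA-102
    "QA-103": {"QA-102"},
    "QA-104": set(),
}


def work_order(blocked_by):
    """Return ticket IDs so that every blocker comes before what it blocks.

    Raises graphlib.CycleError if two tickets block each other, which is
    itself a filing mistake worth catching before the agent starts.
    """
    return list(TopologicalSorter(blocked_by).static_order())
```

The order among unrelated tickets is not meaningful here. Priority labels would still decide between tickets that have no blocking relationship.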

Parallel fixes are possible, but only with tight scope.

You can have the agent working multiple tickets simultaneously. This is one of the genuine speed advantages of the AI dev team structure. But parallel fixes only stay clean if each ticket’s scope is narrow enough that the fix paths don’t intersect. If two tickets touch the same component, sequence them. Don’t run them in parallel and expect the agent to coordinate across sessions.
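The sequencing rule can be sketched as a simple grouping pass. The example assumes you can name the components each ticket's fix is allowed to touch (the scope boundary gives you most of that); the function name, ticket IDs, and component names are hypothetical.

```python
def plan_batches(tickets):
    """Group tickets into batches that are safe to run in parallel.

    `tickets` maps a ticket ID to the set of components its fix may touch.
    A ticket joins the first batch in which no other ticket shares a
    component; otherwise it starts a new, later batch.
    """
    batches = []  # each entry: (ticket_ids, components_in_use)
    for ticket_id, components in tickets.items():
        for ids, in_use in batches:
            if in_use.isdisjoint(components):
                ids.append(ticket_id)
                in_use.update(components)
                break
        else:
            batches.append(([ticket_id], set(components)))
    return [ids for ids, _ in batches]
```

Tickets inside one batch can go to the agent together. Batches run one after another, so two tickets that touch the same component are never in flight at the same time.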

Bug Report Template for AI Dev Teams

Title: [Component/Screen]: [Specific failure description]

Environment:

- URL / screen / feature area

- Browser + version / device

- Account type / role / data state

- Build or branch if known

Steps to Reproduce:

1. [Exact step]

2. [Exact step]

3. [Step that triggers the failure]

Expected Result:

[Specific, observable. Not "it works." What should happen per spec or UX intent.]

Actual Result:

[What actually happened. Observable, no interpretation.]

Severity: [Critical / Major / Moderate / Minor]

Priority: [Urgent / High / Medium / Low]

Scope boundary:

[Explicit statement of what is IN scope for this fix and what is NOT. Example: "Fix applies to the billing status label only. Do not refactor the status component or touch the notification logic."]

Blockers / child issues:

[List linked tickets that must close first, or child issues broken out from this one.]

Evidence:

[Screenshot / recording / console log]

Notes:

[Intermittent? Role-specific? Related to prior fix? Workaround?]

The scope boundary field is the one that doesn’t exist in standard bug report templates and matters most in AI dev team workflows. It explicitly tells the agent where the fix ends. Without it, you’re relying on the agent’s interpretation of scope, and that’s where drift happens.
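If your tracker lets you script ticket creation, the template can double as a pre-filing check. The sketch below is an assumption about how that might look, not a real tracker integration: `BugReport` and `lint` are hypothetical names, and the checks cover only the fields that tend to go missing.

```python
from dataclasses import dataclass, field

SEVERITIES = {"Critical", "Major", "Moderate", "Minor"}
PRIORITIES = {"Urgent", "High", "Medium", "Low"}


@dataclass
class BugReport:
    title: str
    environment: str
    steps: list[str]
    expected: str
    actual: str
    severity: str
    priority: str
    scope_boundary: str
    blockers: list[str] = field(default_factory=list)


def lint(report: BugReport) -> list[str]:
    """Return a list of filing problems; an empty list means ready to file."""
    problems = []
    if ":" not in report.title:
        problems.append("title should read '[Component/Screen]: [failure]'")
    if not report.steps:
        problems.append("no reproduction steps")
    if report.expected.strip().lower().rstrip(".") in {"", "it works"}:
        problems.append("expected result is not specific")
    if report.severity not in SEVERITIES:
        problems.append(f"unknown severity: {report.severity}")
    if report.priority not in PRIORITIES:
        problems.append(f"unknown priority: {report.priority}")
    if not report.scope_boundary.strip():
        problems.append("scope boundary is missing")
    return problems
```

A check like this cannot tell you whether the scope boundary is good, only that one exists. Judging whether it is tight enough is still your call.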

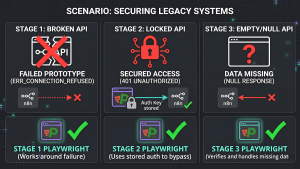

AI Worker Scoping: What Off-Rails Looks Like

Not all AI dev agents are properly scoped by default. This is something you learn by operating inside the loop, not from the documentation.

Off-rails behavior looks like:

- The agent fixes the stated issue but also refactors an adjacent component that wasn’t in the ticket

- The fix technically passes acceptance criteria but changes behavior in a way that wasn’t asked for

- The agent makes a design decision mid-fix that changes the UX of a surface you weren’t testing

- The fix introduces a new pattern inconsistent with the rest of the codebase because the agent decided to “improve” the implementation

None of this is malicious. It’s a scoping failure, either in the ticket, in the agent’s system prompt, or in both. Your job during verification is to check for intent drift, not just acceptance criteria pass/fail.

When you catch drift:

- Don’t try to salvage the fix. File it as a new ticket: “[Component]: Fix overstepped ticket scope, requires rollback and retarget.”

- Document exactly what changed that wasn’t in scope.

- Tighten the scope boundary field on the original ticket before refiling.

- Flag to PM if the same agent drifts consistently on a specific surface. That’s a prompting problem upstream, not a QA problem.

The verification pass for every ticket should include: did the fix do what the ticket asked, and only what the ticket asked.
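Part of the "only what the ticket asked" check can be roughed out from commit output. The sketch below assumes you can get the list of file paths a fix changed and that the ticket's scope boundary can be approximated by path prefixes; both are assumptions, and the paths shown are invented.

```python
def out_of_scope(changed_files, allowed_prefixes):
    """Return changed files that fall outside every allowed path prefix."""
    return sorted(
        path
        for path in changed_files
        if not any(path.startswith(prefix) for prefix in allowed_prefixes)
    )


# Hypothetical fix for a ticket scoped to the billing status label.
changed = [
    "src/billing/status_label.tsx",
    "src/notifications/send.ts",
]
drift_candidates = out_of_scope(changed, ["src/billing/"])
```

Anything this returns is a candidate for review, not proof of drift. The reverse also holds: a fix can stay inside the allowed paths and still change behavior nobody asked for, which is why the hands-on pass stays in the loop.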

Verification Comment Format

Every ticket gets a verification comment before it closes. This is non-negotiable. It’s the paper trail that makes the loop auditable, keeps the PM informed, and gives you a reference when the same surface comes up again next sprint.

VERIFICATION: [PASS / FAIL / PARTIAL]

Tested:

- [What was covered, specifically]

- [Account type / role used]

- [Browser / device / environment]

Adjacent surfaces checked:

- [Surface]: [clean / issue found, see ticket ID]

- [Surface]: [clean / not checked, reason]

Scope drift: [None observed] / [Yes, filed as ticket ID]

Mutations found: [None] / [Yes, filed as ticket ID]

Acceptance criteria: [Met] / [Not met, see below]

[If FAIL or PARTIAL:]

Failure description:

[Exact reproduction of what failed. Steps, observed result, expected result. Paste-ready for the agent to reopen and rework.]

A clean PASS comment closes the ticket. A FAIL comment reopens it with everything the agent needs to fix it on the next pass without a back-and-forth.
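Because the comment format is fixed, it is easy to generate so that no field gets skipped under time pressure. This is a minimal sketch; `render_comment` and its parameters are hypothetical names, and the output is plain text you would paste into whatever tracker you use.

```python
def render_comment(result, tested, adjacent, drift_ticket=None,
                   mutation_ticket=None, criteria_met=True, failure=""):
    """Build a verification comment in the format above as plain text."""
    if result not in {"PASS", "FAIL", "PARTIAL"}:
        raise ValueError(f"unknown result: {result}")
    if result != "PASS" and not failure.strip():
        raise ValueError("FAIL and PARTIAL comments need a failure description")

    lines = [f"VERIFICATION: {result}", "", "Tested:"]
    lines += [f"- {item}" for item in tested]
    lines += ["", "Adjacent surfaces checked:"]
    lines += [f"- {surface}: {status}" for surface, status in adjacent.items()]
    lines += [
        "",
        "Scope drift: "
        + (f"Yes, filed as {drift_ticket}" if drift_ticket else "None observed"),
        "Mutations found: "
        + (f"Yes, filed as {mutation_ticket}" if mutation_ticket else "None"),
        "Acceptance criteria: "
        + ("Met" if criteria_met else "Not met, see below"),
    ]
    if result != "PASS":
        lines += ["", "Failure description:", failure.strip()]
    return "\n".join(lines)
```

Refusing to render a FAIL without a failure description is deliberate: it enforces the rule that a reopened ticket carries everything the agent needs for the next pass.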

What AI Misses: The Human Coverage Layer

The agent fixes what you point at. It doesn’t ask what’s adjacent. Understanding the failure patterns makes you faster at knowing where to look. This is where AI-assisted manual testing and human verification genuinely diverge in practice.

Mutations are the most consistent issue. A fix to one component breaks something on a different screen because the agent patched in isolation and didn’t check downstream. UI layout drifts after a content update. A state transition stops working correctly after what should have been a contained fix. You catch mutations by checking adjacent surfaces on every ticket, not just the surface that was fixed.

Context failures are the more consequential miss. The agent doesn’t know that a paid item and an overdue item look visually identical in the current UI and that this will cause a real user to panic. It doesn’t know which flows are emotionally high-stakes for users. It doesn’t have the product intuition to flag that two technically distinct states are functionally indistinguishable at a glance. That’s not a code problem. It’s a product problem, and it only surfaces when a human who understands the user’s context actually uses the product.

Copy drift is quieter but consistent. The agent updates a label in the component but doesn’t check whether that label appears in onboarding copy, tooltip text, or error messages that still reference the old term. The code is correct. The product is inconsistent. You catch this by reading the product as a user reads it, not as a developer auditing a diff.
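A quick scan can narrow down where to read. The sketch below assumes the product's copy is available as a mapping of string keys to text (for example, exported from a localization file); that mapping, the keys, and the `find_stale_copy` name are all hypothetical.

```python
import re


def find_stale_copy(strings, old_term):
    """Return keys of strings that still mention `old_term` as a whole word."""
    pattern = re.compile(rf"\b{re.escape(old_term)}\b", re.IGNORECASE)
    return sorted(key for key, text in strings.items() if pattern.search(text))


# Hypothetical copy after a label was renamed from "Overdue" to "Past due".
strings = {
    "billing.status_label": "Past due",
    "onboarding.step3": "Overdue invoices appear in red.",
    "tooltip.status": "Shown when an invoice is overdue.",
}
stale = find_stale_copy(strings, "overdue")
```

This only finds literal leftovers. Copy that describes the old behavior in different words still needs a human reading the product the way a user would.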

Security surfaces don’t get checked unless you check them. The agent doesn’t run input validation tests after a form update. It doesn’t verify that session handling is still correct after an auth-adjacent fix. If you’re not running a security surface check as part of your verification pass on relevant tickets, nobody is. The full breakdown of what security testing looks like for QA engineers is worth keeping in your back pocket for every engagement.

Parking Lot Discipline

When you find something adjacent during a verification pass, something that’s broken but wasn’t in the scope of the ticket you’re verifying, it goes in the parking lot. Not into the current ticket. Not into a comment that says “also noticed this.” Into a new ticket, filed immediately, linked to the current one as related.

This is how you keep the loop clean. The current ticket closes on its own scope. The new finding gets its own ticket with its own scope boundary and its own priority call. The agent never has to figure out which thing to fix first in an overloaded ticket. You maintain a clear audit trail of what was found when and what was done about it.

The parking lot is not a backlog. Items that go into the parking lot get filed and prioritized in the same session where they were found. If it’s critical, it goes straight to the active queue. If it’s moderate, it gets filed and slotted by priority. If it’s minor, it gets filed with low priority and doesn’t block the current loop.
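The routing rule is small enough to write down as a lookup. In this sketch the Critical, Moderate, and Minor rows follow the rule above; the Major row and the specific priority labels are assumptions added so that every severity in the template has a route. `park` is a hypothetical helper, not a tracker call.

```python
# severity -> (queue, priority). The Major row is an assumption: the rule
# above does not say where Major findings go.
ROUTES = {
    "Critical": ("active queue", "Urgent"),
    "Major": ("priority-ordered queue", "High"),
    "Moderate": ("priority-ordered queue", "Medium"),
    "Minor": ("priority-ordered queue", "Low"),
}


def park(title, severity, related_to):
    """Turn an adjacent finding into its own ticket, linked to the current one."""
    if severity not in ROUTES:
        raise ValueError(f"unknown severity: {severity}")
    queue, priority = ROUTES[severity]
    return {
        "title": title,
        "severity": severity,
        "priority": priority,
        "queue": queue,
        "related_to": related_to,  # link only; the current ticket keeps its own scope
    }
```

Every finding comes out as a separate ticket with its own priority, which is the whole point: nothing gets appended to the ticket you are verifying.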

Takeaway

The QA engineer who learns this workflow doesn’t just get faster. Their role gets bigger. The hybrid AI QA role isn’t a diminished version of the job. It’s the job with the bottleneck of “wait for a developer to have bandwidth” removed.

Junior QAs who learn to work this loop correctly operate with the leverage of a QA lead. They make severity calls that stick. They file tickets an AI agent can action without ambiguity. They catch mutations, context failures, and scope drift that no automation catches. They manage a parallel fix stream without creating regression cascades. They run security surface checks as part of the standard verification pass, not as a separate engagement.

That’s not a tester anymore. That’s a full QA engineer, and the AI dev team structure is what created the conditions for that upgrade. If you want to take it further, the AI QA team multi-model approach explores what this looks like when you stack multiple AI roles in the same workflow. And if you want to understand the full team structure from the product side, the breakdown of what a real AI-human dev team actually looks like covers how the QA role fits into the broader operation. The only question is whether you learn the workflow or keep waiting for someone to explain why your severity calls got overruled again.